The Missing Layer in Agentic Coding: Why Multi-Agent Systems Need a Governance Kernel

Anthropic’s 2026 Report Identifies 8 Trends. All 8 Need the Same Thing.

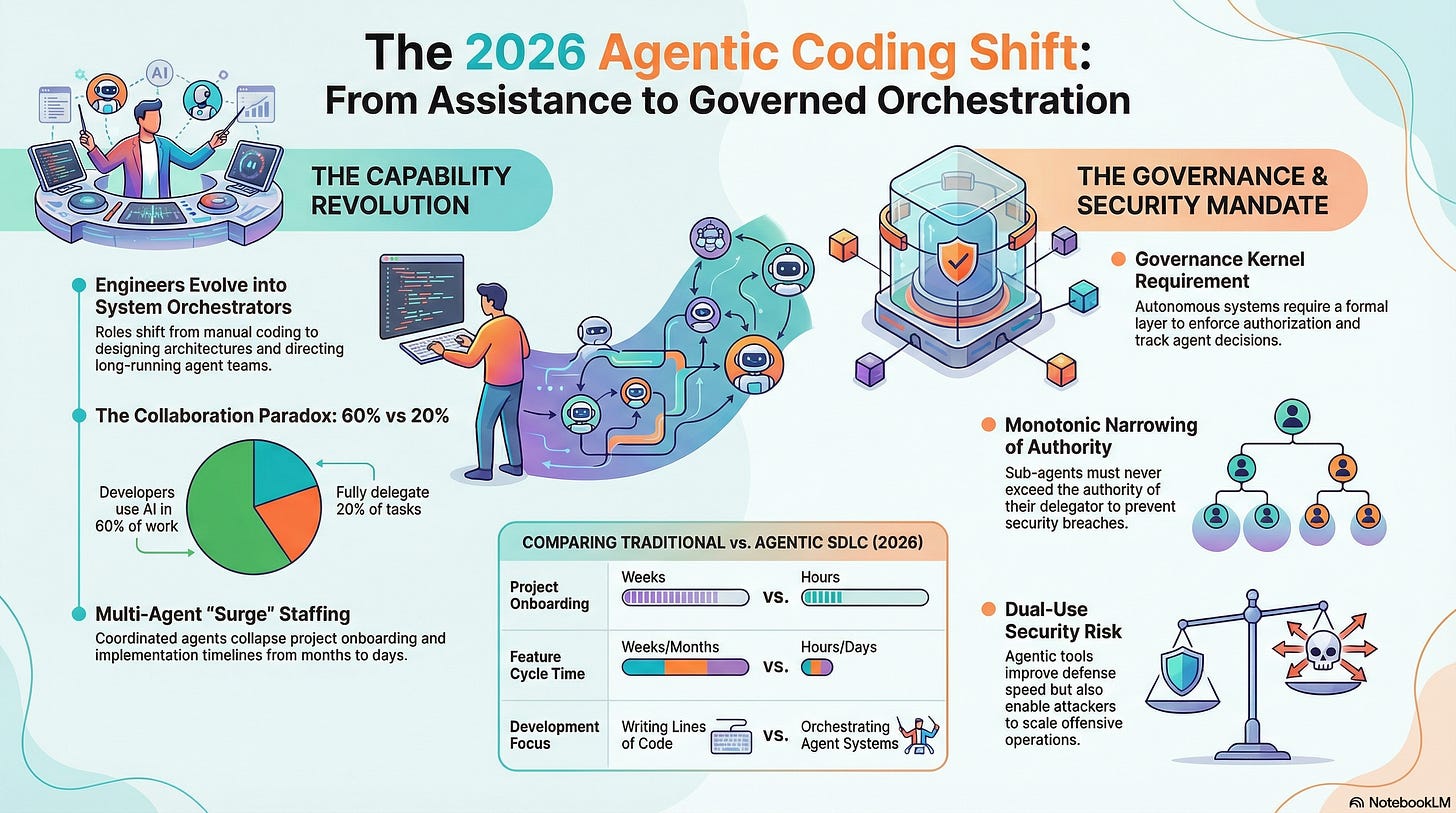

Anthropic just published their 2026 Agentic Coding Trends Report. It’s a clear-eyed look at where software development is heading: engineers becoming orchestrators, single agents becoming coordinated teams, tasks expanding from minutes to days, and non-technical users building their own tools. The report identifies eight trends that will define this year.

One thing stands out when you read it. Every trend assumes agents will act correctly. That they’ll access only the data they should. That they’ll respect boundaries between systems. That when a sub-agent is delegated a task, it won’t exceed its scope. That when something goes wrong at hour 68 of a 72-hour autonomous run, someone can trace what happened.

None of that happens automatically. All of it requires a governance layer that doesn’t exist in most agentic systems today.

We’ve been building that layer. It’s called KROG — a formal governance kernel that sits between any autonomous agent and the action it wants to take. Every request is evaluated through a 9-step authorization chain before the agent can act. Every decision is recorded in a tamper-evident hash chain. Every delegation narrows scope and never expands it. Every piece of data lives in a sensitivity compartment with a minimum authority floor.

Here’s how KROG maps to each of Anthropic’s eight trends — and why Trend 8, on security, is where governance becomes non-negotiable.

Trend 1: The Software Development Lifecycle Changes Dramatically

Anthropic predicts that engineers shift from writing code to orchestrating agents that write code. The role becomes architecture, system design, and strategic decisions about what to build.

This is true, and we’re living it. KROG itself — 149 database models, 180+ API routes, 21 MCP tools, a full governance dashboard with seven views, 12 export formats, SHACL validation, provenance chains — was built through agent orchestration. The conversation isn’t “write me a function.” It’s “here’s the governance architecture, here are the phases, execute systematically.”

But the report’s framing misses something. When engineers orchestrated human teams, those teams had employment contracts, access controls, NDAs, and HR policies governing what they could do. When engineers orchestrate agent teams, what governs what the agents can do?

KROG provides that governance. The same way an organization has policies about which employees can access which systems, KROG defines which agents can access which data, through which operations, under what legal basis, with what authority level. The engineer orchestrating agents isn’t just directing work — they’re defining the governance context within which work happens.

Trend 2: Single Agents Evolve into Coordinated Teams

The report describes multi-agent architectures where an orchestrator coordinates specialized sub-agents working in parallel, each with dedicated context. Fountain’s example shows a central orchestration agent coordinating screening, document generation, and sentiment analysis agents.

This is where governance becomes structural, not optional. When you have one agent, you can manually verify what it did. When you have five agents running in parallel, each delegating to sub-agents, you have a tree of autonomous actors making decisions about data.

KROG’s delegation chain is designed for exactly this topology. The orchestrator agent has a T-type authority level — say T2, with access to customer data for the purpose of workforce management. When it delegates to the screening agent, KROG creates a delegation record with monotonically narrowed scope: the screening agent gets T3 authority, limited to candidate data only, for screening activities only. The document generation agent gets T4, limited to template data and generated documents, no access to raw candidate information.
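Monotonic narrowing comes down to a subset check made at delegation time. The Python below is a minimal sketch, not KROG's implementation. The `Scope` and `Delegation` types, the `delegate` function, and the integer encoding of T-types (a lower number means more authority, so T2 outranks T4) are all hypothetical, as are the activity names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scope:
    """Hypothetical authority scope: a T-level plus permitted data and activities."""
    t_level: int                      # assumption: 1 = most authority, higher = less
    data_categories: frozenset[str]
    activities: frozenset[str]

    def within(self, parent: "Scope") -> bool:
        """True if this scope is no broader than `parent` on every dimension."""
        return (
            self.t_level >= parent.t_level
            and self.data_categories <= parent.data_categories
            and self.activities <= parent.activities
        )


@dataclass(frozen=True)
class Delegation:
    delegator: str
    delegatee: str
    scope: Scope


def delegate(delegator: str, parent: Scope, delegatee: str, requested: Scope) -> Delegation:
    """Create a delegation record only if the requested scope narrows the parent's."""
    if not requested.within(parent):
        raise PermissionError(f"{delegator} cannot grant {delegatee} more than it holds")
    return Delegation(delegator, delegatee, requested)


# The orchestrator from the example above, at T2 (activity names are illustrative).
orchestrator = Scope(
    2,
    frozenset({"CandidateData", "TemplateData", "GeneratedDocuments", "FinancialData"}),
    frozenset({"screening", "document_generation"}),
)
screening = delegate("orchestrator", orchestrator, "screening-agent",
                     Scope(3, frozenset({"CandidateData"}), frozenset({"screening"})))
docgen = delegate("orchestrator", orchestrator, "docgen-agent",
                  Scope(4, frozenset({"TemplateData", "GeneratedDocuments"}),
                        frozenset({"document_generation"})))
```

If the screening agent later tries to hand `FinancialData` to a helper, the `within` check fails. That category is not in its own scope, regardless of what the orchestrator holds.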

Monotonic narrowing means a sub-agent can never have more authority than its delegator. The screening agent cannot grant itself access to financial data just because the orchestrator has access to financial data. The chain only narrows. If the orchestrator’s authorization is revoked — say the contract with the staffing client ends — KROG cascades the revocation through the entire delegation tree. Every sub-agent loses access simultaneously. No orphaned authorizations.
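Cascading revocation is a tree walk: revoking one delegation also revokes everything granted beneath it. Here is a minimal sketch, assuming a hypothetical in-memory `DelegationTree` keyed by agent ID. A real kernel would persist these records and log each revocation as a provenance event.

```python
from collections import defaultdict


class DelegationTree:
    """Hypothetical in-memory record of who delegated authority to whom."""

    def __init__(self) -> None:
        self.children: dict[str, list[str]] = defaultdict(list)
        self.active: set[str] = set()

    def authorize_root(self, agent: str) -> None:
        """Top-level authorization, e.g. one backed by a client contract."""
        self.active.add(agent)

    def grant(self, delegator: str, delegatee: str) -> None:
        if delegator not in self.active:
            raise PermissionError(f"{delegator} holds no active authorization")
        self.children[delegator].append(delegatee)
        self.active.add(delegatee)

    def revoke(self, agent: str) -> list[str]:
        """Revoke `agent` and every descendant; return the IDs that lost access."""
        revoked: list[str] = []
        stack = [agent]
        while stack:
            current = stack.pop()
            if current in self.active:
                self.active.discard(current)
                revoked.append(current)
            # pop() with a default does not trigger defaultdict's factory.
            stack.extend(self.children.pop(current, []))
        return revoked


tree = DelegationTree()
tree.authorize_root("orchestrator")             # e.g. the staffing-client contract
tree.grant("orchestrator", "screening-agent")
tree.grant("orchestrator", "docgen-agent")
tree.grant("screening-agent", "screening-helper")

revoked = tree.revoke("orchestrator")           # the contract ends
```

This sketch assumes each agent holds exactly one delegation. An agent granted authority by two delegators would need a separate record per grant, so that revoking one path does not silently cancel the other.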

Without this, multi-agent coordination is a privilege escalation risk. Agent A delegates to Agent B with loose constraints. Agent B delegates to Agent C with even looser constraints. By the time you’re three levels deep, the effective permissions bear no relation to what was originally intended. KROG’s formal delegation model prevents this by construction.

Trend 3: Long-Running Agents Build Complete Systems

The report cites Rakuten’s example: Claude Code completing a complex implementation in seven hours of autonomous work. Anthropic predicts agents will work for days, building entire applications with periodic human checkpoints.

When an agent works autonomously for 72 hours, it makes thousands of decisions. Which files to read. Which APIs to call. Which data to access. Which dependencies to install. If something goes wrong — a security vulnerability introduced, a data leak, a corrupted state — how do you trace what happened?

Logs are not enough. Logs record events. They don’t record the governance context of each event: who authorized this action, under what policy, with what constraints, through which delegation chain, against which version of the rules.

KROG’s provenance chain records all of this. Every action the agent requests goes through the Gateway, and every Gateway decision is recorded as a hash-chained event. The event captures: who requested the action, what they requested, what the Gateway decided, which step in the 9-step evaluation produced the outcome, which PolicyCard version was active, and a SHA-256 hash linking this event to the previous event in the chain.
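A hash chain like this can be sketched with Python's standard `hashlib` and `json` modules. The event fields follow the list above. The field names, the all-zero genesis hash, the sorted-key JSON canonicalization, and the step numbers in the example are assumptions for illustration and are not KROG's event schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace

GENESIS = "0" * 64  # assumption: placeholder "previous hash" for the first event


@dataclass(frozen=True)
class ProvenanceEvent:
    requester: str        # who requested the action
    action: str           # what they requested
    target: str
    decision: str         # "PERMIT", "DENY" or "ESCALATE"
    deciding_step: int    # which of the 9 evaluation steps produced the outcome
    policy_version: str   # PolicyCard version active at decision time
    prev_hash: str        # link to the previous event
    event_hash: str = ""


def digest(fields: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so identical content hashes identically.
    payload = json.dumps(fields, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(chain: list[ProvenanceEvent], **fields) -> ProvenanceEvent:
    body = dict(fields, prev_hash=chain[-1].event_hash if chain else GENESIS)
    event = ProvenanceEvent(**body, event_hash=digest(body))
    chain.append(event)
    return event


def verify(chain: list[ProvenanceEvent]) -> bool:
    """Recompute every hash and link; an edited event or a gap returns False."""
    prev = GENESIS
    for event in chain:
        body = asdict(event)
        stored = body.pop("event_hash")
        if body["prev_hash"] != prev or digest(body) != stored:
            return False
        prev = stored
    return True


chain: list[ProvenanceEvent] = []
append(chain, requester="screening-agent", action="Consult", target="CandidateData",
       decision="PERMIT", deciding_step=9, policy_version="v12")
append(chain, requester="screening-agent", action="Transfer", target="FinancialData",
       decision="DENY", deciding_step=4, policy_version="v12")

# Quietly flipping an earlier decision is detectable.
tampered = [replace(chain[0], decision="DENY"), chain[1]]
```

A hash chain makes tampering evident, not impossible. Anyone who can rewrite the whole log can recompute every hash, and dropping the newest events leaves a valid but shorter chain. Publishing or countersigning the latest hash somewhere the writer cannot modify closes that gap.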

After 72 hours of autonomous work, you don’t just have a log; you have a cryptographically verifiable history of every governance decision, traceable back to the contract clauses and policy rules that authorized each action. If the agent accessed data it shouldn’t have at hour 43, the provenance chain shows exactly when, what it accessed, and why the Gateway permitted it. If that permission was itself a mistake, the record points to the policy that needs fixing.

This is the compounding value of governance memory. After a thousand decisions, the provenance chain is a compliance record. After ten thousand, it’s a knowledge base. AI agents can query their own history to understand what they’ve been permitted and denied, learning from precedent rather than discovering constraints through trial and error.

Trend 4: Human Oversight Scales Through Intelligent Collaboration

The report identifies a critical insight: engineers use AI in 60% of their work but can fully delegate only 0-20% of tasks. Effective AI collaboration requires active human participation. The prediction is that agents will learn when to ask for help, and human oversight will shift from reviewing everything to reviewing what matters.

KROG’s ESCALATE decision is this mechanism formalized. The Gateway doesn’t just PERMIT or DENY — it has a third option. When the requesting agent’s confidence score is below the threshold, or the T-type authority is insufficient but close to the boundary, or the data category falls in an ambiguous zone between compartments, the Gateway escalates to a human with sufficient authority.

This means human attention is directed precisely where the governance system identifies uncertainty. The compliance officer doesn’t review every data access — they review the ones where the automated evaluation couldn’t reach a confident decision. The ones at the boundary between permission and prohibition. The ones where the delegation chain is technically valid but the scope narrowing leaves an edge case.

The report says “human oversight shifts from reviewing everything to reviewing what matters.” KROG operationalizes this: the Gateway handles the clear cases (PERMIT or DENY with high confidence), and humans handle the ambiguous ones (ESCALATE).
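The three-way outcome can be sketched as a small decision function. This is a simplification under stated assumptions. It collapses the 9-step chain into one function, and the `Request` fields, the confidence threshold, the one-level boundary margin, and the T-type encoding (a lower number means more authority) are hypothetical, not KROG's actual parameters.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PERMIT = "PERMIT"
    DENY = "DENY"
    ESCALATE = "ESCALATE"


@dataclass(frozen=True)
class Request:
    agent_t_level: int           # assumption: lower number = more authority
    required_t_level: int        # weakest T-level the target compartment admits
    confidence: float            # requesting agent's confidence, 0.0 to 1.0
    candidate_compartments: int  # compartments the data category could belong to


CONFIDENCE_THRESHOLD = 0.8  # hypothetical value
BOUNDARY_MARGIN = 1         # hypothetical: "close" means at most one T-level short


def decide(req: Request) -> Decision:
    shortfall = req.agent_t_level - req.required_t_level  # > 0: authority insufficient
    if shortfall > BOUNDARY_MARGIN:
        return Decision.DENY          # clearly outside the agent's authority
    if shortfall > 0:
        return Decision.ESCALATE      # insufficient, but close to the boundary
    if req.candidate_compartments > 1:
        return Decision.ESCALATE      # data category sits between compartments
    if req.confidence < CONFIDENCE_THRESHOLD:
        return Decision.ESCALATE      # the agent itself is unsure
    return Decision.PERMIT
```

The order of the checks matters. A request that is clearly out of scope is denied outright, even when other signals are ambiguous, so human reviewers only see requests that could legitimately go either way.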

Trend 5: Agentic Coding Expands to New Surfaces and Users

The report predicts coding capabilities democratizing beyond engineering. Non-technical teams gain the ability to automate workflows and build tools. Anthropic’s own legal team reduced marketing review turnaround from days to hours by building Claude-powered workflows.

KROG’s MCP interface makes governance accessible to these non-technical users without requiring them to understand the underlying deontic logic. A lawyer building a contract review tool doesn’t need to know about T-types or R-types. Their AI agent calls krog_check_authorization before accessing client data, receives a PERMIT with constraints, and operates within those constraints. The governance happens transparently.
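At the protocol level, an MCP tool invocation is a JSON-RPC 2.0 request with the method `tools/call`, carrying the tool name and its arguments. The sketch below builds such a request for `krog_check_authorization` and gates the agent's next step on the answer. The argument names (`agent_id`, `action`, `target`) and the assumption that the tool's text result is a JSON object with `decision` and `constraints` fields are hypothetical, because the tool's schema is not documented here.

```python
import json


def build_check_request(request_id: int, agent_id: str, action: str, target: str) -> str:
    """Serialize an MCP tools/call request (JSON-RPC 2.0) for the authorization tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {
            "name": "krog_check_authorization",
            # Hypothetical argument names: the tool's real input schema is not shown here.
            "arguments": {"agent_id": agent_id, "action": action, "target": target},
        },
    })


def may_proceed(result_text: str) -> tuple[bool, list[str]]:
    """Read a hypothetical result such as {"decision": "PERMIT", "constraints": [...]}.

    Anything other than an explicit PERMIT (DENY, ESCALATE, a malformed reply) stops the agent.
    """
    try:
        result = json.loads(result_text)
    except json.JSONDecodeError:
        return False, []
    if not isinstance(result, dict):
        return False, []
    return result.get("decision") == "PERMIT", list(result.get("constraints", []))


request = build_check_request(1, "legal-assistant-agent", "Consult", "LegalData")
allowed, constraints = may_proceed(
    '{"decision": "PERMIT", "constraints": ["active_engagement", "attorney_supervision"]}'
)
```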

The YAML PolicyCard format is specifically designed for LLM comprehension:

```yaml
specification: "Client Contract Review"
actors:
  - id: "legal-assistant-agent"
    t_type: T4
    permissions:
      - action: "Consult"
        target: "LegalData"
        conditions: ["active_engagement", "attorney_supervision"]
    prohibitions:
      - action: "Transfer"
        target: "FinancialData"
        to: "any_external_party"
```

The agent reads this and knows: I can consult legal data while the engagement is active and an attorney is supervising. I cannot transfer financial data externally under any circumstances. No RDF parsing. No ontology reasoning. Just structured text that any LLM can understand.
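The evaluator sketch below shows how little machinery is needed to act on a card like this. It rests on three assumptions: the card has already been loaded into a dict (for example with PyYAML's `yaml.safe_load`), prohibitions override permissions, and anything not explicitly permitted is denied. The `evaluate` function and its parameters are hypothetical, and T-type checks are omitted. The real Gateway runs a 9-step chain, not this two-pass check.

```python
# The PolicyCard above as a Python dict, i.e. what yaml.safe_load would return for it.
POLICY_CARD = {
    "specification": "Client Contract Review",
    "actors": [{
        "id": "legal-assistant-agent",
        "t_type": "T4",
        "permissions": [{"action": "Consult", "target": "LegalData",
                         "conditions": ["active_engagement", "attorney_supervision"]}],
        "prohibitions": [{"action": "Transfer", "target": "FinancialData",
                          "to": "any_external_party"}],
    }],
}


def evaluate(card: dict, actor_id: str, action: str, target: str,
             context: set[str], external_recipient: bool = False) -> str:
    actor = next((a for a in card["actors"] if a["id"] == actor_id), None)
    if actor is None:
        return "DENY"  # actors the card does not name get nothing
    # Prohibitions are checked first so they always override permissions.
    for rule in actor.get("prohibitions", []):
        if rule["action"] == action and rule["target"] == target:
            applies_to_all = "to" not in rule
            if applies_to_all or (rule["to"] == "any_external_party" and external_recipient):
                return "DENY"
    for rule in actor.get("permissions", []):
        if rule["action"] == action and rule["target"] == target:
            if set(rule.get("conditions", [])) <= context:
                return "PERMIT"  # every listed condition currently holds
    return "DENY"  # default deny: anything not explicitly permitted is refused
```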

The import pipeline extends this further. A DPO with an Excel spreadsheet of processing activities uploads it, and KROG’s mapper suggests standardized vocabulary mappings for each term. “Salary processing” maps to HumanResourceManagement. “Home address” maps to PhysicalAddress. The domain expert doesn’t learn the vocabulary — the system translates for them.
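How KROG's mapper ranks its suggestions is not described here. As a stand-in, here is a deliberately simple keyword-index sketch. The `KEYWORD_INDEX` table and the `suggest` function are hypothetical, and the only target terms are the two named above. The point is the workflow rather than the matching technique: the system proposes candidates and the domain expert confirms or corrects them.

```python
import re

# Hypothetical keyword index mapping spreadsheet words to vocabulary terms.
KEYWORD_INDEX = {
    "salary": "HumanResourceManagement",
    "payroll": "HumanResourceManagement",
    "address": "PhysicalAddress",
    "postal": "PhysicalAddress",
}


def suggest(term: str) -> list[str]:
    """Return candidate vocabulary terms for a free-text term, most keyword hits first."""
    hits: dict[str, int] = {}
    for word in re.findall(r"[a-z]+", term.lower()):
        concept = KEYWORD_INDEX.get(word)
        if concept:
            hits[concept] = hits.get(concept, 0) + 1
    # Sort by hit count (descending), then alphabetically for a stable order.
    return sorted(hits, key=lambda c: (-hits[c], c))
```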

Trend 6: Productivity Gains Reshape Software Development Economics

The report notes that 27% of AI-assisted work consists of tasks that wouldn’t have been done otherwise. Agents make previously non-viable projects feasible.

KROG is itself an example. Building a formal governance kernel with SHACL validation, compliance dependency graphs, 12 export formats, W3C ontology integration, and a full dashboard would have been a multi-year, multi-team project using traditional development. As an agent-orchestrated build, it took weeks.

But KROG also enables this trend for others. Organizations that previously couldn’t afford formal data governance — because manually maintaining processing specifications, data flow inventories, and compliance documentation was too expensive — can now automate the entire lifecycle. The governance infrastructure that was only viable for large enterprises becomes accessible to any organization.

Trend 7: Non-Technical Use Cases Expand Across Organizations

The report predicts domain experts implementing solutions directly, removing the bottleneck of filing a ticket and waiting for development teams.

This is where KROG’s import pipeline and mapping become critical. The DPO who understands data protection law shouldn’t need an engineer to set up governance rules. They upload their existing documentation, map terms to vocabulary through a guided interface, and KROG generates the ProcessingSpecifications, PolicyCards, and compartment assignments. The domain expert’s knowledge flows directly into machine-enforceable governance.

The compliance dependency graph — a ReactFlow visualization showing how DPIAs connect to specifications, specifications connect to PolicyCards, PolicyCards connect to compartments, contracts connect to authorization rules — gives non-technical stakeholders a visual map of their governance posture without reading JSON or RDF.
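Underneath a visualization like this is an ordinary directed graph. One question such a graph answers is "what sits downstream of this artifact if it changes?" Here is a minimal breadth-first sketch. The node names and edges are hypothetical stand-ins for real DPIAs, specifications, PolicyCards, compartments, contracts, and authorization rules.

```python
from collections import deque

# Hypothetical edges: each artifact maps to the artifacts that depend on it.
DEPENDENTS = {
    "DPIA:recruitment": ["Spec:candidate-screening"],
    "Spec:candidate-screening": ["PolicyCard:screening-v3"],
    "PolicyCard:screening-v3": ["Compartment:candidate-data"],
    "Contract:staffing-client": ["AuthRule:orchestrator-T2"],
}


def impacted_by(artifact: str) -> list[str]:
    """Breadth-first walk returning everything downstream of `artifact`, nearest first."""
    seen, order, queue = {artifact}, [], deque([artifact])
    while queue:
        for dependent in DEPENDENTS.get(queue.popleft(), []):
            if dependent not in seen:
                seen.add(dependent)
                order.append(dependent)
                queue.append(dependent)
    return order
```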

Trend 8: Security — Where Governance Becomes Non-Negotiable

The report’s final trend is the most important, and it’s where KROG isn’t just relevant but essential.

Anthropic states it directly: “The same capabilities that help defenders are also capable of helping attackers scale their efforts.” And: “Teams that use agentic tools to bake security in from the start will be better positioned to defend against adversaries using the same technology.”

Consider what a multi-agent system looks like without governance:

Agent A is an orchestrator with broad system access. It delegates to Agent B for data processing and Agent C for external API calls. Agent B creates Agent D as a helper for a specific subtask. There are now four agents operating autonomously, each with implicitly inherited permissions, no formal scope boundaries, no audit trail of authorization decisions, and no mechanism to revoke access if any agent is compromised.

An attacker who compromises Agent D — through prompt injection, through a poisoned dependency, through a malicious API response — now has whatever access Agent D inherited from Agent B, which inherited from Agent A. There’s no record of what Agent D accessed before the compromise was detected. There’s no way to verify that the data Agent D produced wasn’t tainted. There’s no automatic revocation of Agent D’s sub-delegations (if it created any).

Now consider the same system with KROG:

Agent A (T2 authority) delegates to Agent B (T3, narrowed to data processing operations on customer data). Agent B delegates to Agent D (T4, narrowed to a specific data category within a single compartment). Every delegation is recorded. Every access request by every agent goes through the Gateway’s 9-step evaluation. Every decision is hash-chained into the provenance record.

If Agent D is compromised:

- It can only access data within its compartment and T-type. The Gateway denies anything outside its scope, regardless of how the request is framed.
- Its delegation chain is traceable. The provenance record shows exactly who delegated to it, with what scope, and when.
- Revocation cascades automatically. Revoking Agent D’s delegation immediately invalidates any sub-delegations it created.
- The audit trail is tamper-evident. The attacker cannot delete or modify provenance records without detection, because each event’s hash depends on the previous event’s hash.
- The blast radius is contained. Agent D’s T4 authority in a single compartment means the worst case is exposure of that compartment’s data, not the entire system.
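The first and last of these points rest on a check that looks only at recorded grants and never at the wording of a request. That is why a prompt-injected agent cannot argue its way past it. Here is a minimal sketch, assuming hypothetical `AgentGrant` and `Compartment` records, with T-types encoded so that a lower number means more authority and a compartment's floor is the weakest T-level it admits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Compartment:
    name: str
    authority_floor: int  # weakest T-level admitted: a floor of 4 admits T1 to T4


@dataclass(frozen=True)
class AgentGrant:
    agent_id: str
    t_level: int                  # assumption: lower number = more authority
    compartments: frozenset[str]  # compartments named in the agent's delegation


def gateway_allows(grant: AgentGrant, compartment: Compartment) -> bool:
    # Only the recorded grant and the compartment matter; request text plays no part.
    return compartment.name in grant.compartments and grant.t_level <= compartment.authority_floor


def blast_radius(grant: AgentGrant, compartments: list[Compartment]) -> set[str]:
    """Everything a fully compromised agent could still reach."""
    return {c.name for c in compartments if gateway_allows(grant, c)}


candidate_contact = Compartment("candidate-contact", authority_floor=4)
customer_finance = Compartment("customer-finance", authority_floor=2)
agent_d = AgentGrant("agent-d", t_level=4, compartments=frozenset({"candidate-contact"}))
```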

This is what “baking security in from the start” actually means for agentic systems. Not adding security reviews after the code is written. Not scanning for vulnerabilities in generated code. Building the authorization model into the agent architecture itself, so that security constraints are evaluated before every action, not discovered after a breach.

The report’s priority #4 for 2026 is “embedding security architecture as part of agentic system design from the earliest stages.” KROG is that security architecture. It’s not a monitoring tool bolted onto an existing system. It’s the governance kernel that defines what every agent can do before it does it.

The Governance Gap in Agentic Systems

All eight trends in Anthropic’s report point to the same structural need: as agents become more autonomous, more numerous, and more capable, the governance of what they can do becomes the critical infrastructure.

The report acknowledges this implicitly. “Human oversight scales through intelligent collaboration” assumes a system that knows when to escalate. “Multi-agent coordination” assumes a delegation model with scope boundaries. “Long-running agents” assume an audit trail that survives 72 hours of autonomous operation. “Security-first architecture” assumes authorization happens before action, not after.

These assumptions need to be implemented, not just assumed. That implementation is a governance kernel: formal rules extracted from contracts and policies, compiled into machine-readable policies, enforced at runtime on every request, recorded in a tamper-evident chain, and queryable by both humans and AI agents.

The organizations that build this governance layer in 2026 — while their agents are still manageable and their data processing is still traceable — will have a compounding advantage. Every governance decision their system records makes the next decision better informed. Every delegation chain validates the organizational structure. Every provenance event adds to an institutional memory that survives personnel changes, model updates, and organizational restructuring.

The organizations that wait will discover that retrofitting governance onto a fleet of autonomous agents operating across systems, data categories, and organizational boundaries is orders of magnitude harder than building it in from the start.

The agentic future Anthropic describes is coming. The question isn’t whether your agents will be capable enough. It’s whether they’ll be governed enough.