Introducing KROG Prover: A Rules Small Language Model

Why I am building a specialized model that reads contracts and extracts mathematical structure

Large language models are impressive generalists. They can write poetry, debug code, and summarize documents. But when it comes to understanding the precise obligations and permissions buried in legal text, they hallucinate. They make things up. They miss negations. They conflate “shall” with “may.”

This matters. Contracts govern trillions of dollars in transactions. A misread clause isn’t a minor inconvenience—it’s a lawsuit waiting to happen.

So I asked: what if we built a small model that does one thing exceptionally well?

The Problem with General-Purpose LLMs

When you ask GPT-4 or Claude to analyze a contract clause like:

“The Provider shall deliver the Software within 30 days, notwithstanding any force majeure event.”

...you get a reasonable summary. But you don’t get mathematical precision. You don’t get:

T-type T5 (Must Act) for the Provider

R-type R11 (Authority relationship with the receiving party)

Temporal pattern:

within(30, days)Modal operator: O() obligation with exception clause

General models approximate. KROG Prover extracts.

Dual-Inference Architecture

KROG Prover combines two systems:

KUIE (Symbolic Engine) — Deterministic, rule-based, under 2 milliseconds. Handles clear-cut clauses with zero hallucination. When the language is unambiguous, symbolic reasoning is both faster and more reliable.

KROG LLM (Neural Network) — A small language model (350M–1.3B parameters) trained specifically on legal text and KROG structure. Handles ambiguity, implicit obligations, and the messy reality of natural language contracts.

The consensus engine compares both outputs. When they agree, confidence is high. When they disagree, the system knows to flag for human review rather than guess.

Why Small?

Three reasons:

1. Specialization beats scale. A 1B model trained on millions of contract clauses outperforms a 100B generalist on this specific task. We don’t need the model to write haikus. We need it to recognize that “shall not unreasonably withhold” creates a qualified prohibition.

2. Deployability. Small models run on-premise, in browsers, on edge devices. No API calls, no data leaving your infrastructure, no latency.

3. Transparency. Every prediction maps to formal KROG structure. You can trace exactly why the model classified a clause as creating an obligation versus a permission. No black boxes.

Mathematically-Grounded Confidence

Here’s what makes KROG Prover different from other legal AI: it knows when it doesn’t know.

We’ve integrated information-theoretic hallucination detection based on recent research. The system computes an Information Sufficiency Ratio (ISR): a mathematical measure of whether the contract text provides enough evidence to support the prediction.

ISR ≥ 1.0: Sufficient information. Answer with confidence.

ISR < 1.0: Insufficient information. Abstain or flag for review.

This isn’t a confidence score pulled from thin air. It’s derived from the relationship between prior probability (how rare is this R-type?) and the information gain from the actual contract text.

For rare but critical patterns like R27 (Deadlock), where two parties have mutually exclusive obligations, the model requires proportionally more evidence before making a claim. As it should.

What’s Next

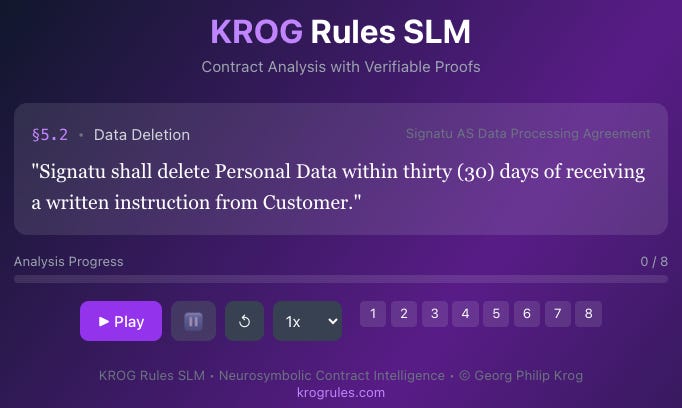

KROG Prover is currently in development. We’re training on hundreds of thousands of contract clauses, using a joint-embedding approach that learns the deep relationship between legal language and KROG formalism.

The vision: upload a contract PDF, get back a complete KROG analysis. Every obligation identified. Every permission mapped. Every potential conflict flagged. All grounded in the same mathematical framework that handles contracts and regulations.

KROG is a universal framework for rules and authorization. Learn more at KROG Rules

Tags: #LegalTech #AI #Contracts #NLP #SmallLanguageModels #KROG