EU AI Act Decoded: A Compact Guide to AI Risk Levels

The European Union's AI Act introduces a nuanced, risk-based approach to regulating artificial intelligence.

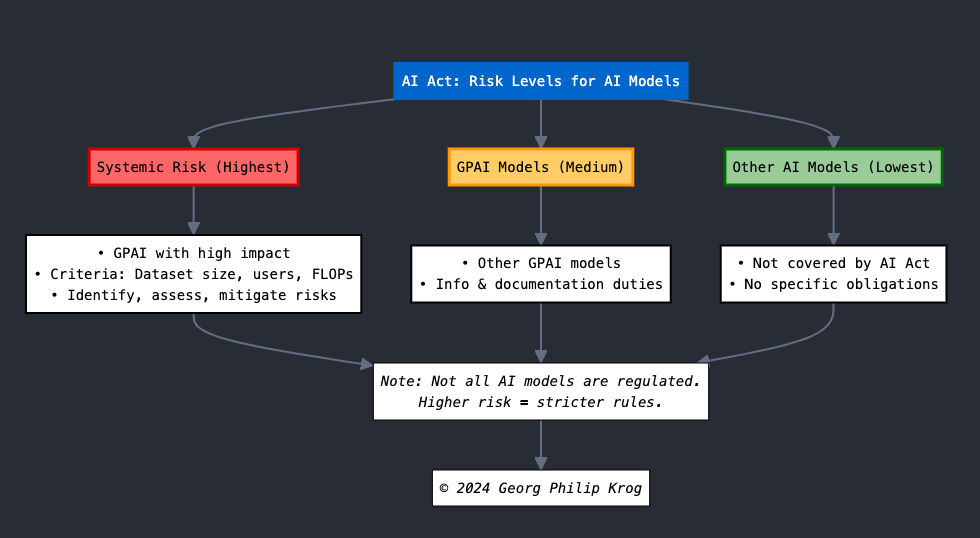

Today, I am breaking down these risk levels with a clear, concise infographic created by Georg Philip Krog.

The Three Risk Levels in a Nutshell

Our compact infographic illustrates the three tiers that the EU AI Act applies to AI models:

Systemic Risk (Highest Level)

Applies to: General Purpose AI with high impact

Key points: Assessed on criteria such as training dataset size, number of users, and training compute (FLOPs); requires risk identification and mitigation

GPAI Models (Medium Level)

Applies to: Other General Purpose AI models

Key point: Information and documentation obligations

Other AI Models (Lowest Level)

Status: Not covered by the AI Act's rules for general-purpose AI models

Implication: No specific model-level obligations
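
To make the three tiers concrete, here is a minimal Python sketch of how a first-pass classification might look. It is an illustration under stated assumptions, not legal advice or an official method: the `ModelProfile` fields, the `classify` function, and the `OBLIGATIONS` mapping are hypothetical names invented for this example. The 10^25 FLOPs figure reflects the Act's presumption of high-impact capabilities for general-purpose models based on cumulative training compute. The other criteria mentioned above, such as dataset size and number of users, are collapsed into a single `designated_high_impact` flag, which is a simplification.

```python
from dataclasses import dataclass
from enum import Enum

# Training compute above which a general-purpose model is presumed to have
# high-impact capabilities (cumulative FLOPs, strictly greater than 10^25).
SYSTEMIC_RISK_FLOP_THRESHOLD = 1e25


class Tier(Enum):
    SYSTEMIC_RISK = "GPAI model with systemic risk"
    GPAI = "GPAI model"
    OTHER = "Other AI model"


# Obligations as summarised in the infographic (not an exhaustive legal list).
OBLIGATIONS = {
    Tier.SYSTEMIC_RISK: "Risk identification and mitigation",
    Tier.GPAI: "Information and documentation obligations",
    Tier.OTHER: "No specific obligations",
}


@dataclass
class ModelProfile:
    """Hypothetical record describing a model for a first-pass triage."""
    name: str
    is_general_purpose: bool
    training_flops: float
    # Assumption: stands in for a designation based on other criteria
    # (dataset size, number of users, and so on).
    designated_high_impact: bool = False


def classify(model: ModelProfile) -> Tier:
    """Return the tier a model would fall into under this simplified reading."""
    if not model.is_general_purpose:
        return Tier.OTHER
    if (model.training_flops > SYSTEMIC_RISK_FLOP_THRESHOLD
            or model.designated_high_impact):
        return Tier.SYSTEMIC_RISK
    return Tier.GPAI


if __name__ == "__main__":
    examples = [
        ModelProfile("frontier-llm", True, 3e25),
        ModelProfile("mid-size-llm", True, 1e23),
        ModelProfile("fraud-classifier", False, 1e18),
    ]
    for m in examples:
        tier = classify(m)
        print(f"{m.name}: {tier.value} -> {OBLIGATIONS[tier]}")
```

A real assessment would weigh each criterion separately and involve legal review; the sketch only shows how the tiers relate to one another.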

Why This Matters

The EU AI Act's tiered approach balances innovation with protection. Understanding where your AI systems fall within this framework is crucial for compliance and risk management.

Signatu's Role

At Signatu, we're developing a standard machine-readable vocabulary to streamline AI compliance documentation. Our goal is to make navigating these regulations more manageable for organizations of all sizes.

What challenges do you foresee in classifying your AI systems under this framework? We'd love to hear your thoughts in the comments.

© 2024 Georg Philip Krog