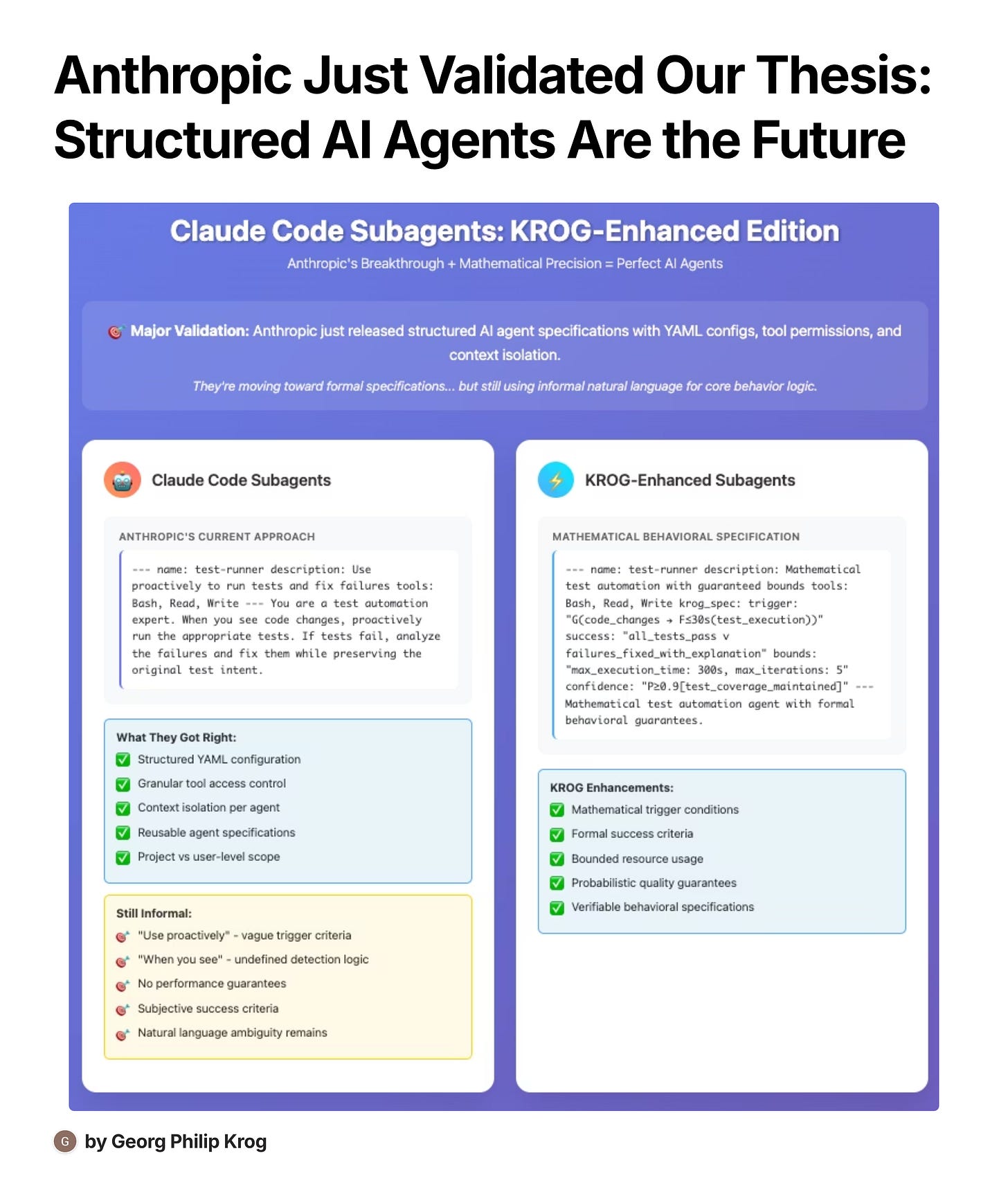

Anthropic Just Validated Structured AI Agents. Here's the Missing Mathematical Layer.

How Claude Code Subagents prove the future is formal specifications—and what comes next

Yesterday, Anthropic quietly released one of the most important advances in AI agent architecture: Claude Code Subagents. While the AI community was focused on model capabilities, Anthropic solved a fundamental infrastructure problem that's been plaguing production AI systems.

They proved that structured AI agent specifications are not just possible—they're essential.

But they also revealed the missing piece that could make AI agents truly reliable.

What Anthropic Got Right: The Structural Revolution

Claude Code Subagents introduce a formal specification format for AI agents:

---

name: code-reviewer

description: Expert code review specialist. Use immediately after writing code.

tools: Read, Grep, Glob, Bash

---

You are a senior code reviewer ensuring high standards of code quality and security.

When invoked:

1. Run git diff to see recent changes

2. Focus on modified files

3. Begin review immediatelyThis seemingly simple format represents a massive conceptual breakthrough:

1. Structured Configuration (YAML Frontmatter)

Instead of monolithic prompts, agents are defined with:

Explicit naming and descriptions

Granular tool permissions

Clear scope definitions

Reusable specifications

2. Context Isolation

Each subagent operates in its own context window, preventing:

Context pollution of the main conversation

Attention drift from mixing concerns

Memory interference between different tasks

3. Tool Access Control

Subagents can be granted specific tools only:

tools: Read, Grep, Bash # Limited permissions

# vs

tools: # Inherits all tools (default)This enables security boundaries and focused functionality.

4. Hierarchical Organization

.claude/agents/ # Project-level subagents

~/.claude/agents/ # User-level subagentsProject subagents override user ones, enabling team collaboration while preserving personal customization.

Why This Matters: The Infrastructure Problem

Before subagents, AI systems faced the "Swiss Army Knife Problem": one agent trying to handle every task led to:

Context explosion (conversations became unwieldy)

Attention dilution (AI lost focus on primary objectives)

Capability confusion (unclear when to use which tools)

Prompt complexity (trying to handle every scenario in one instruction set)

Anthropic's solution is elegant: instead of one super-agent, create specialized agents with focused responsibilities.

This mirrors how human organizations work: you don't ask your CFO to review code or your lead developer to analyze quarterly financials. Specialization enables expertise.

The Missing Mathematical Layer

While Anthropic solved the structural problem, they still rely on informal natural language for behavioral specification:

You are a senior code reviewer ensuring high standards of code quality and security.

When invoked:

1. Run git diff to see recent changes

2. Focus on modified files

3. Begin review immediatelyThis creates the same problems we see everywhere in AI engineering:

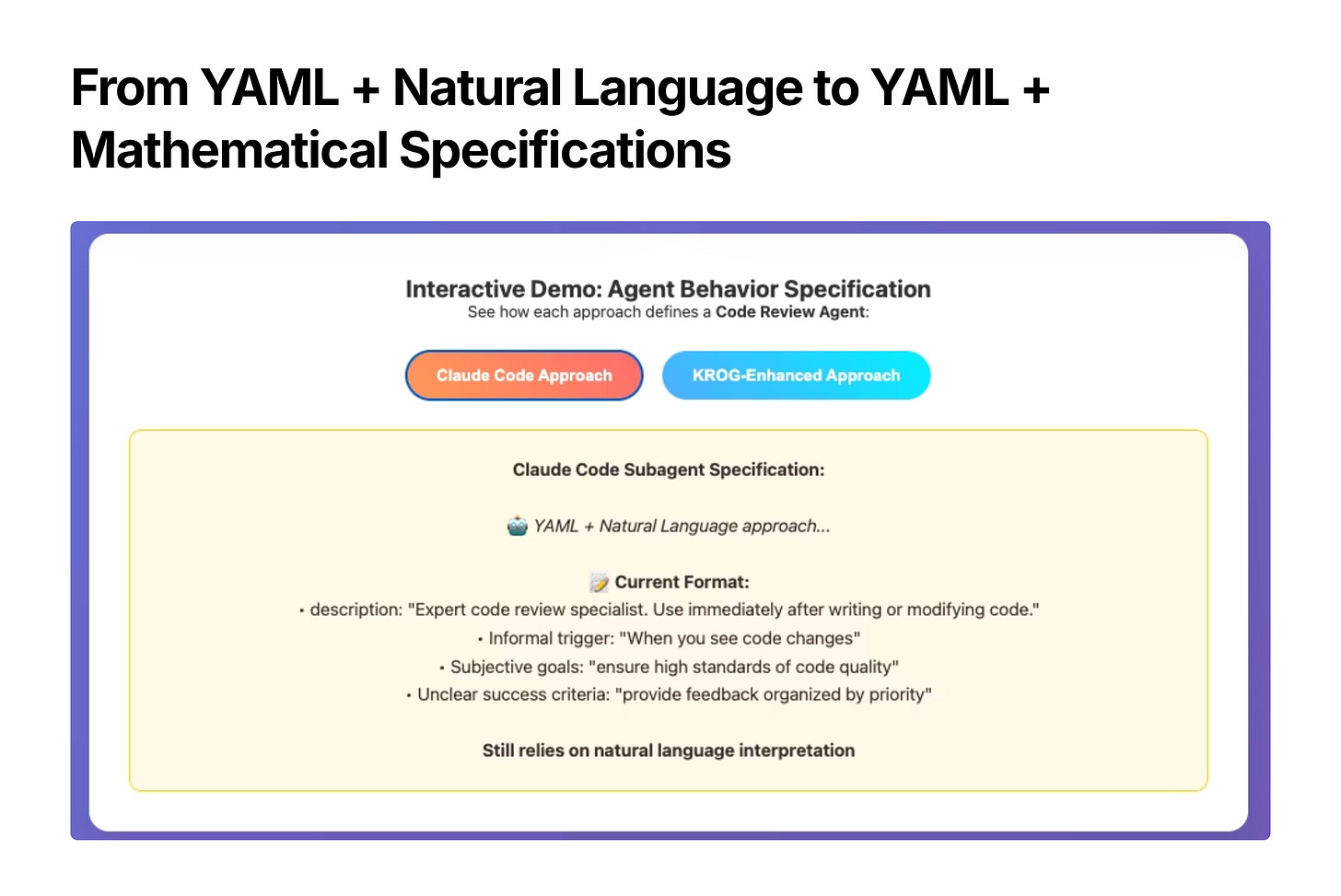

Problem 1: Vague Trigger Conditions

"Use immediately after writing code"

"When you see code changes"

"Use proactively when needed"

What constitutes "writing code"? A single line change? A new file? A git commit? The ambiguity leads to inconsistent agent invocation.

Problem 2: Undefined Success Criteria

"Ensuring high standards"

"Focus on modified files"

"Begin review immediately"

What are "high standards"? How do you verify the review was complete? When is the task considered finished?

Problem 3: No Resource Bounds

There's no specification for:

Maximum execution time

Tool call limits

Context size constraints

Failure recovery procedures

Agents can theoretically run indefinitely or consume excessive resources.

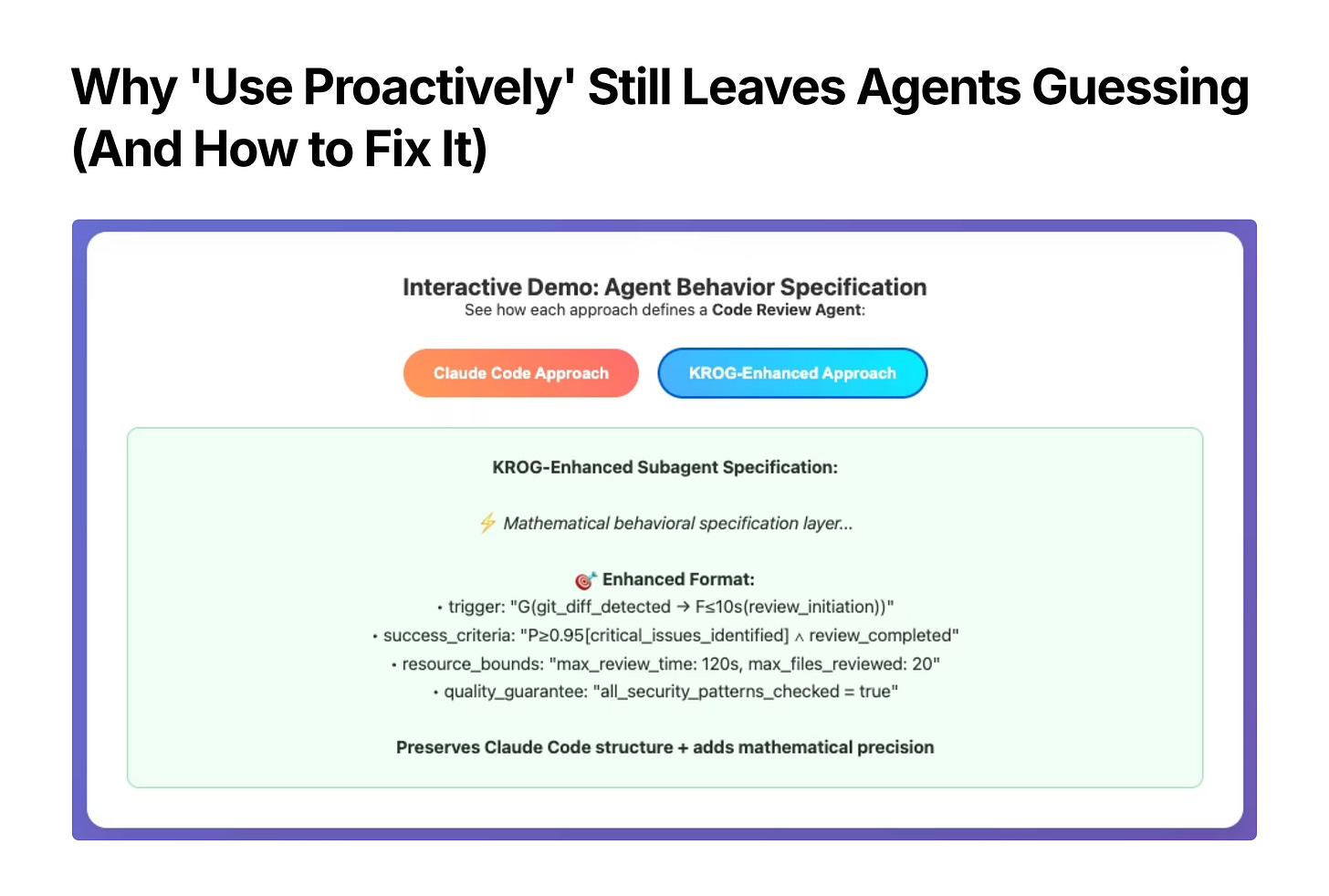

The KROG Enhancement: Mathematical Behavioral Specifications

The missing piece is mathematical specifications for agent behavior. Here's how the code reviewer would look with formal behavioral guarantees:

---

name: code-reviewer

description: Mathematical code review with guaranteed bounds and quality metrics

tools: Read, Grep, Glob, Bash

krog_spec:

trigger_condition: "G(git_diff_detected → F≤10s(review_initiation))"

success_criteria: "P≥0.95[critical_issues_identified] ∧ review_completed"

resource_bounds:

max_execution_time: "120s"

max_files_reviewed: 20

max_tool_calls: 15

quality_guarantees:

security_check: "all_auth_patterns_verified = true"

performance_check: "algorithmic_complexity_analyzed = true"

style_check: "code_standards_compliance ≥ 90%"

---

Mathematical code review agent with formal behavioral guarantees.What This Achieves:

1. Precise Trigger Conditions G(git_diff_detected → F≤10s(review_initiation)) means "Always: when git diff is detected, review initiation must happen within 10 seconds."

2. Verifiable Success CriteriaP≥0.95[critical_issues_identified] means "95% probability that critical issues are identified if they exist."

3. Bounded Resource Usage Mathematical constraints prevent runaway processes and guarantee predictable performance.

4. Quality Metrics Objective measures replace subjective assessments like "high standards."

Backward Compatibility: Building on Anthropic's Foundation

The crucial insight: KROG specifications enhance rather than replace Claude Code's structure.

---

# Keep Anthropic's excellent YAML structure

name: test-runner

description: Proactive test automation with mathematical guarantees

tools: Bash, Read, Write

# Add mathematical behavioral layer

krog_spec:

trigger: "G(code_changes → F≤30s(test_execution))"

success: "all_tests_pass ∨ failures_fixed_with_explanation"

bounds: "max_execution_time: 300s, max_iterations: 5"

confidence: "P≥0.9[test_coverage_maintained]"

---

# Existing natural language prompt preserved for readability

You are a test automation expert. Mathematical specifications above define your precise behavioral bounds.This preserves:

✅ YAML configuration structure

✅ Tool permission system

✅ Context isolation

✅ Project/user hierarchy

✅ File-based organization

While adding:

⚡ Mathematical precision

⚡ Formal verification

⚡ Resource guarantees

⚡ Systematic optimization

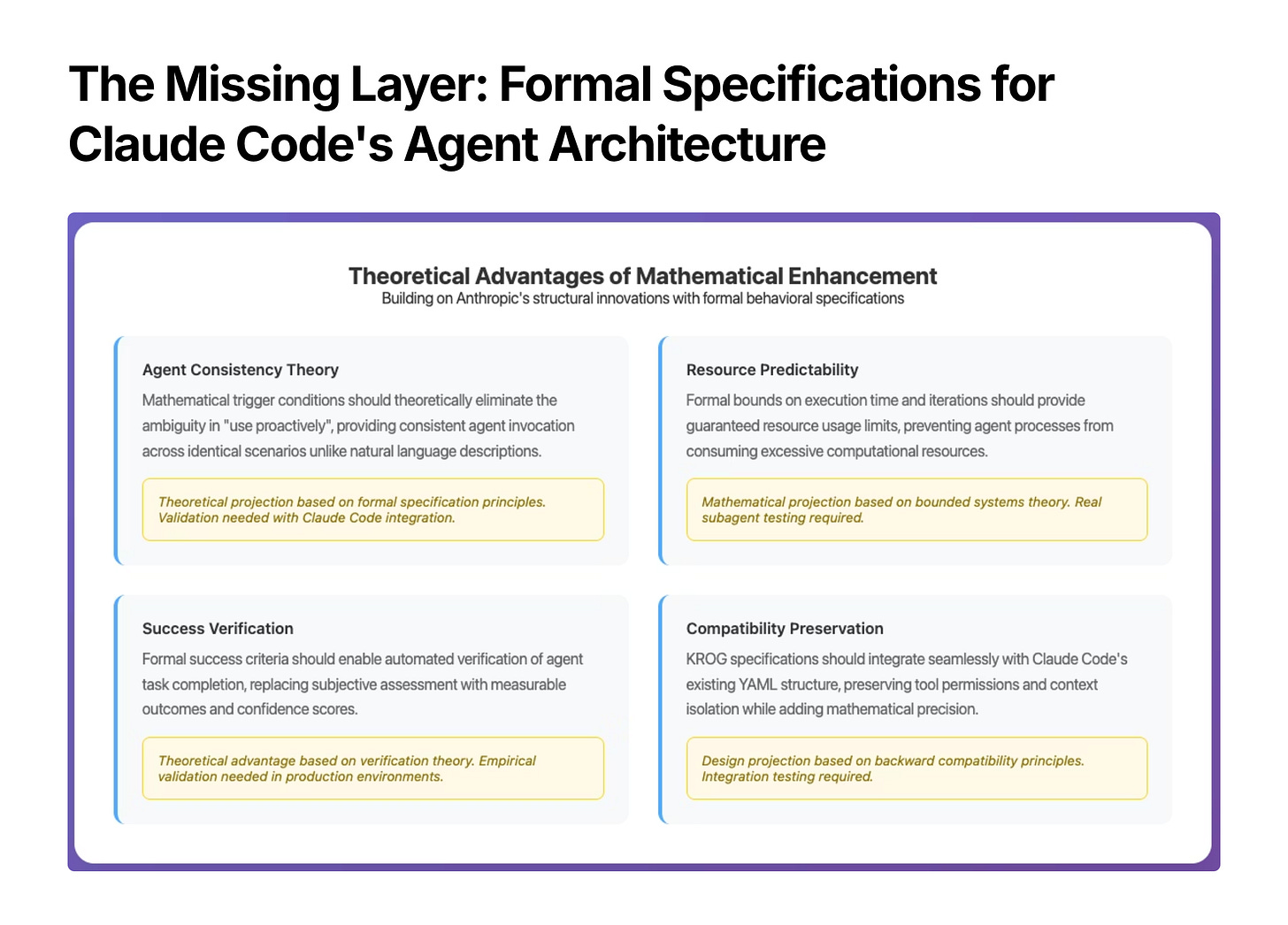

Theoretical Advantages of the Mathematical Layer

1. Agent Consistency

Current: "Use proactively" interpreted differently across runs

Enhanced: G(condition → F≤time(action)) guarantees identical behavior

2. Resource Predictability

Current: Agents might run indefinitely or consume excessive resources

Enhanced: Mathematical bounds provide guaranteed limits

3. Success Verification

Current: Subjective assessment of "task completion"

Enhanced: Measurable criteria with confidence intervals

4. Systematic Improvement

Current: Manual tweaking of natural language descriptions

Enhanced: Mathematical optimization of formal specifications

Real-World Impact Scenarios

Code Review Agent

# Current: Subjective, unbounded

description: "Review code for quality and security issues"

# Enhanced: Objective, bounded

krog_spec:

execution_guarantee: "review_complete_within_60s ∨ partial_results_with_status"

coverage_requirement: "all_modified_functions_reviewed = true"

security_verification: "no_hardcoded_secrets ∧ input_validation_present"Test Runner Agent

# Current: Vague triggers

description: "Run tests when you see code changes"

# Enhanced: Precise conditions

krog_spec:

trigger: "G(file_modified ∧ has_tests → test_execution_required)"

success: "(test_pass_rate ≥ 95%) ∨ failures_analyzed_with_fixes"

performance: "total_test_time ≤ max_acceptable_duration"Documentation Agent

# Current: Undefined completeness

description: "Update documentation when code changes"

# Enhanced: Measurable completeness

krog_spec:

coverage_target: "public_api_documentation_completeness ≥ 90%"

freshness_guarantee: "doc_updates_within_24h_of_code_changes"

quality_metric: "documentation_clarity_score ≥ 8/10"The Validation Moment

Anthropic's release proves several crucial points:

1. The Market Recognizes the Need

Major AI companies are investing in structured agent specifications. The days of monolithic, informal prompting are numbered.

2. YAML + Natural Language Isn't Enough

While structural improvements are necessary, they're not sufficient. Behavioral precision requires mathematical specifications.

3. The Infrastructure Is Ready

Claude Code's architecture provides the perfect foundation for mathematical enhancements. The integration path is clear.

4. Timing Is Critical

We're at the inflection point where AI systems transition from experimental tools to production infrastructure. Mathematical reliability becomes essential.

What This Means for AI Engineering

For Individual Developers

Start thinking about AI agents as formal specifications rather than conversational partners

Design agent responsibilities with clear boundaries and measurable outcomes

Use mathematical constraints to prevent runaway processes

For Engineering Teams

Adopt structured agent architectures like Claude Code Subagents

Implement formal verification for agent behavior specifications

Build systematic optimization processes instead of manual tuning

For AI Infrastructure Companies

The market is moving toward formal specifications

Mathematical behavioral layers will become table stakes

Integration with existing structured systems provides the fastest path to adoption

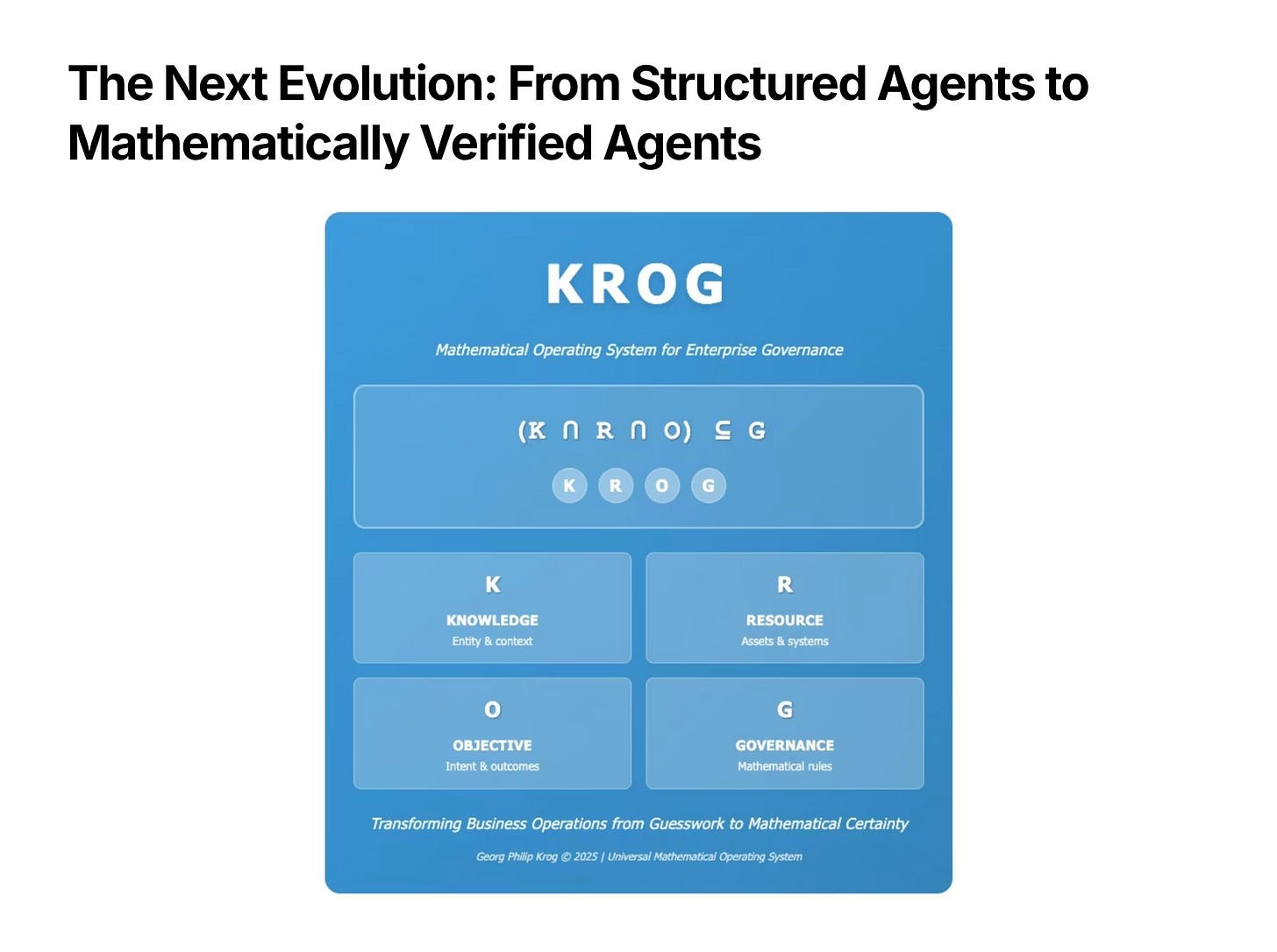

The Future: Mathematical AI Agent Architecture

Anthropic proved that structured specifications work. The next evolution is mathematical precision.

The combination of:

Claude Code's structural innovation (YAML, tools, context isolation)

Mathematical behavioral specifications (triggers, bounds, success criteria)

Formal verification systems (contradiction detection, resource guarantees)

Creates the foundation for truly reliable AI agents.

What's Next

The theoretical advantages are clear. The infrastructure exists. The market validation is complete.

The missing piece:

Real-world testing of mathematical specifications integrated with Claude Code's architecture.

For organizations building production AI systems:

The question isn't whether to adopt formal specifications—it's how quickly you can transition from informal prompting to mathematical precision.

The future of AI engineering is formal. Anthropic just built the infrastructure. Mathematical specifications complete the picture.

Are you using Claude Code Subagents in production? Have you experienced the challenges of informal agent specifications? The mathematical enhancement framework is ready for validation in real-world environments.

Coming: How to migrate existing AI workflows to mathematical specifications without breaking current systems.